Giant Chips Give Supercomputers a Run for Their Money

As large supercomputers keep getting larger, Sunnyvale, California-based Cerebras has been taking a different approach. Instead of connecting more and more GPUs together, the company has been squeezing as many processors as it can onto one giant wafer. The main advantage is in the interconnects—by wiring processors together on-chip, the wafer-scale chip bypasses many of the computational speed losses that come from many GPUs talking to each other, as well as losses from loading data to and from memory.

Now, Cerebras has flaunted the advantages of its wafer-scale chips in two separate but related results. First, the company demonstrated that its second-generation wafer-scale engine, WSE-2, was significantly faster than the world’s fastest supercomputer, Frontier, in molecular dynamics calculations, the field that underlies protein folding, modeling radiation damage in nuclear reactors, and other problems in materials science. Second, in collaboration with machine learning model optimization company Neural Magic, Cerebras demonstrated that a sparse large language model could perform inference at one-third of the energy cost of a full model without losing any accuracy. Although the results are in vastly different fields, they were both possible because of the interconnects and fast memory access enabled by Cerebras’ hardware.

Speeding Through the Molecular World

“Imagine there’s a tailor and he can make a suit in a week,” says Cerebras CEO and co-founder Andrew Feldman. “He buys the neighboring tailor, and she can also make a suit in a week, but they can’t work together. Now, they can make two suits in a week. But what they can’t do is make a suit in three and a half days.”

According to Feldman, GPUs are like tailors that can’t work together, at least when it comes to some problems in molecular dynamics. As you connect more and more GPUs, they can simulate more atoms at the same time, but they can’t simulate the same number of atoms more quickly.

Cerebras’ wafer-scale engine, however, scales in a fundamentally different way. Because the chips are not limited by interconnect bandwidth, they can communicate quickly, like two tailors collaborating perfectly to make a suit in three and a half days.

To demonstrate this advantage, the team simulated 800,000 atoms interacting with each other, calculating the interactions in increments of one femtosecond at a time. Each step took just microseconds to compute on Cerebras’ hardware. Although that is still nine orders of magnitude slower than the actual interactions, it is also 179 times as fast as the Frontier supercomputer. The achievement effectively reduced a year’s worth of computation to just two days.
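
The figures in that paragraph can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes each step takes exactly one microsecond of wall-clock time (the article says only "microseconds"), and the function and constant names are made up for this example.

```python
import math

SIMULATED_STEP_S = 1e-15     # one femtosecond of simulated time per step
SPEEDUP_VS_FRONTIER = 179    # speedup quoted in the article
ASSUMED_WALL_STEP_S = 1e-6   # assumption: "microseconds" taken as 1 microsecond


def slowdown_orders_of_magnitude(wall_step_s, simulated_step_s):
    """Powers of ten by which the simulation lags the real process."""
    return math.log10(wall_step_s / simulated_step_s)


def days_after_speedup(baseline_days, speedup):
    """Wall-clock days needed once the run is `speedup` times faster."""
    return baseline_days / speedup


orders = slowdown_orders_of_magnitude(ASSUMED_WALL_STEP_S, SIMULATED_STEP_S)
days = days_after_speedup(365, SPEEDUP_VS_FRONTIER)

print(f"about {orders:.0f} orders of magnitude slower than real time")
print(f"one year of computation shrinks to about {days:.1f} days")
```

Under that one-microsecond assumption, the ratio of wall-clock time to simulated time comes out at nine orders of magnitude, and dividing 365 days by 179 gives roughly two days, in line with the numbers quoted.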

This work was done in collaboration with Sandia, Lawrence Livermore, and Los Alamos National Laboratories. Tomas Oppelstrup, staff scientist at Lawrence Livermore National Laboratory, says this advance makes it feasible to simulate molecular interactions that were previously inaccessible.

Oppelstrup says this will be particularly useful for understanding the longer-term stability of materials in extreme conditions. “When you build advanced machines that operate at high temperatures, like jet engines, nuclear reactors, or fusion reactors for energy production,” he says, “you need materials that can withstand these high temperatures and very harsh environments. It’s difficult to create materials that have the right properties, that have a long lifetime and sufficient strength and don’t break.” Being able to simulate the behavior of candidate materials for longer, Oppelstrup says, will be crucial to the material design and development process.

Ilya Sharapov, principal engineer at Cerebras, says the company is looking forward to extending applications of its wafer-scale engine to a larger class of problems, including molecular dynamics simulations of biological processes and simulations of airflow around cars or aircraft.

Downsizing Large Language Models

As large language models (LLMs) become more popular, the energy costs of using them are starting to overshadow the training costs, potentially by as much as a factor of ten by some estimates. “Inference is the primary workload of AI today because everyone is using ChatGPT,” says James Wang, director of product marketing at Cerebras, “and it’s very expensive to run, especially at scale.”

One way to reduce the energy cost of inference (and increase its speed) is through sparsity: essentially, harnessing the power of zeros. LLMs are made up of huge numbers of parameters. The open-source Llama model used by Cerebras, for example, has 7 billion parameters. During inference, each of those parameters is used to crunch through the input data and spit out the output. If, however, a significant fraction of those parameters are zeros, they can be skipped during the calculation, saving both time and energy.
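
The idea of skipping zeros can be illustrated with a toy dot product. This is a minimal sketch in plain Python, not how any real accelerator or the Cerebras hardware implements it; the function names and the ten-element example are invented for illustration.

```python
def dense_dot(weights, inputs):
    """Multiply-accumulate over every parameter, zeros included."""
    total, multiplications = 0.0, 0
    for w, x in zip(weights, inputs):
        total += w * x
        multiplications += 1
    return total, multiplications


def sparse_dot(weights, inputs):
    """Same result, but zero-valued parameters are skipped entirely."""
    total, multiplications = 0.0, 0
    for w, x in zip(weights, inputs):
        if w == 0.0:
            continue  # a zero contributes nothing, so do no work for it
        total += w * x
        multiplications += 1
    return total, multiplications


# Hypothetical parameters: 6 of the 10 are zero (60 percent sparsity).
weights = [0.5, 0.0, 0.0, -1.0, 0.0, 2.0, 0.0, 0.0, 0.0, 0.25]
inputs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]

dense_result, dense_mults = dense_dot(weights, inputs)
sparse_result, sparse_mults = sparse_dot(weights, inputs)

print(dense_result, dense_mults)
print(sparse_result, sparse_mults)
```

Both functions return the same sum, but the sparse version performs a multiplication only for the non-zero parameters. The saving is real only if checking each parameter for zero is cheap.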

The problem is that skipping specific parameters is difficult to do on a GPU. Reading from memory on a GPU is relatively slow, because GPUs are designed to read memory in chunks, which means taking in groups of parameters at a time. This doesn’t allow GPUs to skip zeros that are randomly interspersed in the parameter set. Cerebras CEO Feldman offered another analogy: “It’s equivalent to a shipper, only wanting to move stuff on pallets because they don’t want to examine each box. Memory bandwidth is the ability to examine each box to make sure it’s not empty. If it’s empty, set it aside and then not move it.”

Some GPUs are equipped for a particular kind of sparsity, called 2:4, where exactly two out of every four consecutively stored parameters are zeros. State-of-the-art GPUs have terabytes per second of memory bandwidth. The memory bandwidth of Cerebras’ WSE-2 is more than one thousand times as high, at 20 petabytes per second. This allows for harnessing unstructured sparsity, meaning the researchers can zero out parameters as needed, wherever in the model they happen to be, and check each one on the fly during a computation. “Our hardware is built right from day one to support unstructured sparsity,” Wang says.
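
The difference between 2:4 and unstructured sparsity can be made concrete with a small check. The sketch below is an illustration in plain Python with invented names and example values; it only tests whether a parameter list follows the 2:4 pattern described above and says nothing about how GPUs or the WSE-2 store or process the data.

```python
def is_two_four_sparse(params):
    """True if every consecutive group of four holds exactly two zeros."""
    if len(params) % 4 != 0:
        return False
    for start in range(0, len(params), 4):
        group = params[start:start + 4]
        if sum(1 for p in group if p == 0) != 2:
            return False
    return True


def sparsity(params):
    """Fraction of parameters that are exactly zero."""
    return sum(1 for p in params if p == 0) / len(params)


# Hypothetical parameter sets, both 50 percent zeros.
structured = [0.3, 0.0, 0.0, -1.2, 0.0, 0.7, 0.0, 0.1]
unstructured = [0.0, 0.0, 0.0, -1.2, 0.4, 0.7, 0.0, 0.1]

print(sparsity(structured), is_two_four_sparse(structured))
print(sparsity(unstructured), is_two_four_sparse(unstructured))
```

Both lists are half zeros, but only the first places exactly two zeros in each group of four. The second has zeros wherever they happen to fall, which is the unstructured case the article describes.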

Even with the appropriate hardware, zeroing out many of the model’s parameters results in a worse model. But the joint team from Neural Magic and Cerebras figured out a way to recover the full accuracy of the original model. After slashing 70 percent of the parameters to zero, the team performed two further phases of training to give the non-zero parameters a chance to compensate for the new zeros.
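
The article does not say how the team chose which parameters to zero out or how the follow-up training was run. As a rough illustration only, the sketch below assumes magnitude pruning (dropping the smallest-magnitude parameters, a common technique that may differ from what Neural Magic and Cerebras used) and a masked update that keeps pruned parameters at zero while the surviving ones continue to train. All names and values are hypothetical.

```python
def magnitude_prune(weights, target_sparsity):
    """Zero out the smallest-magnitude weights; return (pruned, mask)."""
    n_prune = round(len(weights) * target_sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_indices = set(order[:n_prune])
    mask = [0 if i in pruned_indices else 1 for i in range(len(weights))]
    pruned = [w if m else 0.0 for w, m in zip(weights, mask)]
    return pruned, mask


def masked_update(weights, grads, mask, learning_rate):
    """One gradient step that leaves pruned positions at exactly zero."""
    return [
        (w - learning_rate * g) if m else 0.0
        for w, g, m in zip(weights, grads, mask)
    ]


# Hypothetical ten-parameter "model" pruned to 70 percent sparsity.
weights = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02, 0.3, -0.03, 0.08, 1.5]
pruned, mask = magnitude_prune(weights, 0.70)

# Stand-in gradients; in real training these come from backpropagation.
grads = [1.0] * len(weights)
retrained = masked_update(pruned, grads, mask, learning_rate=0.1)

print(mask)
print(retrained)
```

Only the three largest-magnitude parameters survive, and only those three change during the masked update, which is the sense in which the remaining parameters get a chance to compensate for the new zeros.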

This extra training uses about 7 percent of the original training energy, and the companies found that it recovers full model accuracy. The smaller model takes one-third as much time and energy during inference as the original, full model. “What makes these novel applications possible in our hardware,” Sharapov says, “is that there’s a million cores in a very tight package, meaning that the cores have very low latency, high bandwidth interactions between them.”

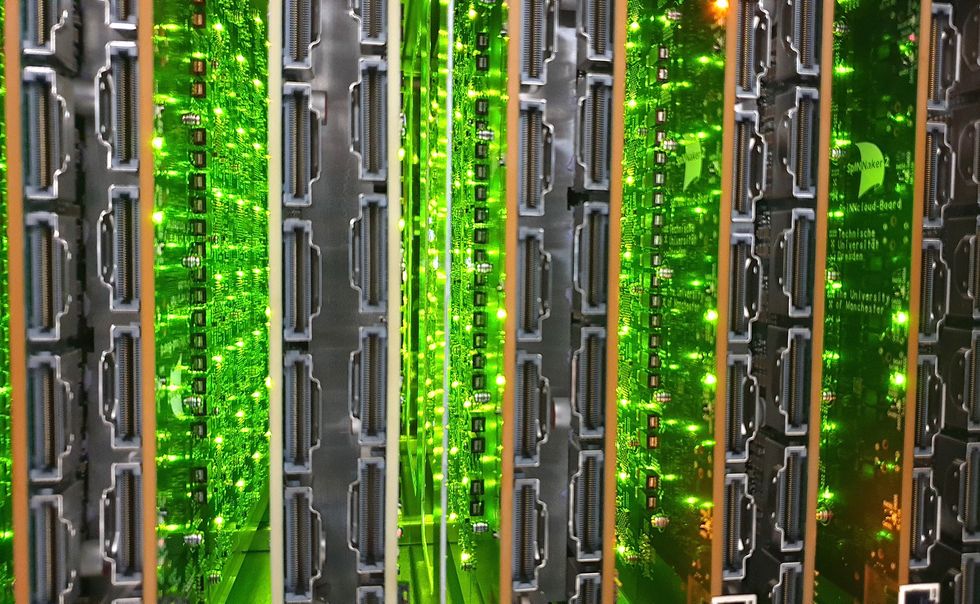

The SpiNNaker2 supercomputer has been designed to model up to 10 billion neurons, about one-tenth the number in the human brain. SpiNNCloud Systems